Load Balancing: An Overview and Key Algorithms

EP04 | System Design | HLD Series | #02

In highly scalable, distributed systems, managing server load efficiently is crucial for high availability, performance, and reliability. This is where load balancing comes in. It is the process of distributing network or application traffic across multiple servers so that no single server is overwhelmed. Load balancing helps prevent downtime, reduce latency, and make better use of resources.

This article will cover:

What Load Balancing is.

Different Load Balancing Algorithms.

Visual diagrams for each algorithm.

What is Load Balancing?

Load balancing spreads workloads across multiple servers or resources to prevent bottlenecks and failures. A load balancer typically sits between clients and the backend servers. For each incoming request, it uses the chosen algorithm to decide which server handles it.

Load balancers are commonly classified by the network layer at which they operate:

Layer 4 (Transport Layer): Operates at the transport layer (TCP/UDP) to direct traffic based on IP addresses and ports.

Layer 7 (Application Layer): Works at the application layer (HTTP/HTTPS), directing traffic based on application-level information (e.g., URL, headers).

Key Load Balancing Algorithms

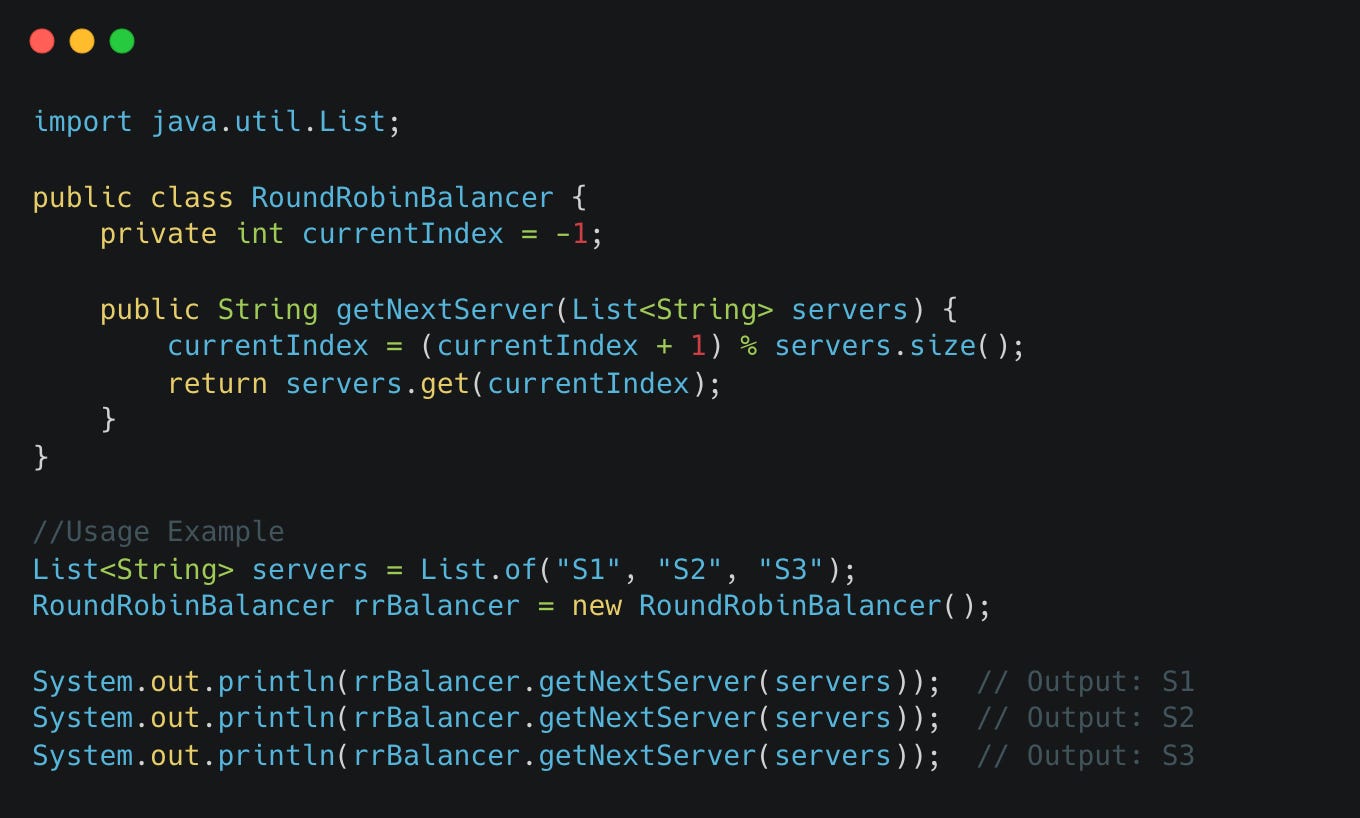

1. Round Robin Algorithm

The Round Robin algorithm is one of the simplest load-balancing techniques. It sends client requests to the servers one after another in a fixed circular order. After the last server in the list receives a request, the next request goes back to the first server.

When to Use:

Homogeneous server environments where each server has the same capacity.

Situations where the traffic load is relatively stable and consistent.

Advantages:

Simple to implement: Requires minimal configuration.

Uniform distribution: Over each full cycle, every server receives the same number of requests. It does not necessarily receive the same amount of work.

Disadvantages:

Ignores server capacity: It assumes that all servers have the same processing power, which may lead to overloaded servers if capacities differ.

No session persistence: Consecutive requests from the same user may land on different servers. This can break sessions that are stored on a single server.

Code Implementation
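
The following is a minimal Python sketch, not the author's original code. It uses `itertools.cycle` to walk the server list in a circle. The class and method names (`RoundRobinBalancer`, `next_server`) and the server names are hypothetical. The sketch assumes a single thread calls it, so a real load balancer would need locking or per-worker state.

```python
import itertools
from typing import Iterator, List


class RoundRobinBalancer:
    """Hands out servers in a fixed circular order (hypothetical helper, not thread-safe)."""

    def __init__(self, servers: List[str]) -> None:
        if not servers:
            raise ValueError("at least one server is required")
        # Copy the list so later changes by the caller don't affect the rotation.
        self._cycle: Iterator[str] = itertools.cycle(list(servers))

    def next_server(self) -> str:
        # Return the next server; after the last one, wrap around to the first.
        return next(self._cycle)


if __name__ == "__main__":
    lb = RoundRobinBalancer(["app-1", "app-2", "app-3"])  # hypothetical server names
    print([lb.next_server() for _ in range(5)])
    # ['app-1', 'app-2', 'app-3', 'app-1', 'app-2']
```

The balancer keeps no information about the servers beyond their order. That makes it simple, and it is also why it cannot react to differences in capacity.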

2. Weighted Round Robin Algorithm

A variation of Round Robin, the Weighted Round Robin algorithm assigns weights to servers based on their capacity. A server with a higher weight receives more requests compared to one with a lower weight.

When to Use:

Heterogeneous environments where servers have different capacities.

Workloads that require differentiated handling based on server capabilities.

Advantages:

Flexibility: Allows for better use of high-capacity servers by assigning them more traffic.

Predictable: Requests are distributed based on predefined weights, ensuring that powerful servers handle more load.

Disadvantages:

Weight assignment complexity: Deciding on proper weights can be tricky, especially as traffic patterns change.

Static weights: If server performance changes over time, manual adjustment is required.

Code Implementation
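
Below is a hedged Python sketch of one common weighting scheme, often called "smooth" weighted round robin. Each server accumulates credit equal to its weight, and the server with the most credit is picked and then charged the total weight. Heavier servers get proportionally more requests, and the picks are interleaved rather than sent in bursts. The class name `SmoothWeightedRoundRobin` and the dictionary-of-weights input are illustrative assumptions.

```python
from typing import Dict


class SmoothWeightedRoundRobin:
    """Weighted round robin that interleaves picks (hypothetical helper, not thread-safe)."""

    def __init__(self, weights: Dict[str, int]) -> None:
        if not weights or any(w <= 0 for w in weights.values()):
            raise ValueError("need at least one server, each with a positive weight")
        self._weights = dict(weights)
        self._total = sum(self._weights.values())
        # Running "credit" per server, starting at zero.
        self._current = {server: 0 for server in self._weights}

    def next_server(self) -> str:
        # 1. Every server earns credit equal to its weight.
        for server, weight in self._weights.items():
            self._current[server] += weight
        # 2. Pick the server with the most credit (ties go to the earliest-listed server).
        chosen = max(self._current, key=lambda s: self._current[s])
        # 3. Charge the winner the total weight so the others can catch up.
        self._current[chosen] -= self._total
        return chosen
```

With weights 5, 1, and 1 for hypothetical servers `a`, `b`, and `c`, seven calls return `a, a, b, a, c, a, a`. After those seven calls every server's credit is back to zero, so the pattern repeats. The weights are still static, so the manual-adjustment drawback above remains.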

3. Least Connections Algorithm

In this algorithm, the load balancer routes each new request to the server with the fewest active connections. The connection count serves as a proxy for current workload, so new traffic goes to the least busy server and away from overloaded ones.

When to Use:

Environments with varying traffic loads and long-running sessions.

Ideal when the workload is unpredictable and some requests consume more server resources than others.

Advantages:

Dynamic load balancing: Adapts to real-time traffic loads, distributing requests to the least-burdened server.

Efficient for long-lived connections: A server tied up with long sessions receives fewer new requests until its connection count drops.

Disadvantages:

Ignores server performance: May still overload underperforming servers, as it doesn't account for the server's capacity or response times.

Tracking overhead: The load balancer must keep an up-to-date count of active connections for every server. This adds state and some extra work per request.

Code Implementation
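
Here is a minimal Python sketch, assuming the caller reports when each connection opens and closes. The method names `acquire` and `release` are hypothetical. In a real load balancer, these events would come from the proxy's connection handling rather than explicit calls.

```python
from typing import Dict, List


class LeastConnectionsBalancer:
    """Sends each new connection to the least-loaded server (hypothetical helper, not thread-safe)."""

    def __init__(self, servers: List[str]) -> None:
        if not servers:
            raise ValueError("at least one server is required")
        # Number of currently open connections per server.
        self._active: Dict[str, int] = {server: 0 for server in servers}

    def acquire(self) -> str:
        # Fewest active connections wins; ties go to the earliest-listed server.
        server = min(self._active, key=lambda s: self._active[s])
        self._active[server] += 1
        return server

    def release(self, server: str) -> None:
        # Must be called when a connection to `server` closes.
        if self._active[server] == 0:
            raise ValueError(f"{server} has no active connections")
        self._active[server] -= 1
```

The per-server counter dictionary is the extra state mentioned under the disadvantages. Every request touches it once on open and once on close.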

4. IP Hash Algorithm

The IP Hash algorithm distributes requests based on the client's IP address. A hash function calculates a value from the client’s IP, and that value is used to assign the client to a specific server. As long as the list of servers does not change, the same client is consistently directed to the same server.

When to Use:

Situations requiring session persistence or sticky sessions (e.g., e-commerce, chat applications).

Environments where you want to ensure that the same user is always routed to the same server.

Advantages:

Ensures session persistence: A specific client always connects to the same server, which is essential for session continuity.

Scalability: Works well with large-scale distributed applications that need user affinity.

Disadvantages:

Not balanced for server load: May lead to unbalanced loads if certain clients generate significantly more requests than others.

Limited flexibility: Clients cannot easily be moved off an overloaded server. Adding or removing a server can also change the mapping for many clients.

Code Implementation
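
This is a minimal Python sketch of IP hashing with a simple hash-then-modulo mapping, which is an assumption. The class name `IPHashBalancer` is hypothetical. It deliberately uses `hashlib.sha256` instead of Python's built-in `hash()`, because the built-in hash of strings and bytes is salted per process. With the built-in hash, two load balancer instances could send the same client to different servers.

```python
import hashlib
import ipaddress
from typing import List


class IPHashBalancer:
    """Maps each client IP to a fixed server while the server list is unchanged (hypothetical helper)."""

    def __init__(self, servers: List[str]) -> None:
        if not servers:
            raise ValueError("at least one server is required")
        self._servers = list(servers)

    def server_for(self, client_ip: str) -> str:
        # Parse and normalize the address (IPv4 or IPv6); raises ValueError for bad input.
        packed = ipaddress.ip_address(client_ip).packed
        # Stable hash: the same IP yields the same digest in every process.
        digest = hashlib.sha256(packed).digest()
        # Reduce the first 8 bytes of the digest to an index into the server list.
        index = int.from_bytes(digest[:8], "big") % len(self._servers)
        return self._servers[index]
```

The modulo step explains the flexibility drawback above. Changing `len(self._servers)` changes the index for many addresses. Consistent hashing is a common remedy, but it is beyond this sketch.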

5. Least Response Time Algorithm

This algorithm considers both the number of active connections and the response time of each server. The server with the fewest connections is not always the fastest one. For that reason, implementations typically combine the two metrics into a single score and assign the next request to the server with the best score.

When to Use:

Environments where response time is critical (e.g., low-latency applications, real-time trading platforms).

Applications with varying response times and fluctuating workloads.

Advantages:

Highly adaptive: Considers both active connections and server response time, leading to optimized resource allocation.

Reduces latency: Requests are routed to servers with the fastest response times, improving user experience.

Disadvantages:

High overhead: Monitoring response times and active connections adds processing overhead to the load balancer.

Complex to implement: Requires more sophisticated infrastructure to track response times dynamically.

Code Implementation
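
Here is a hedged Python sketch under one assumed scoring rule. Each server's average response time is tracked as an exponentially weighted moving average (EWMA). That average is then multiplied by the number of requests the new one would join (`active + 1`), and the lowest score wins. Real products use their own formulas. The names `alpha`, `initial_ms`, `acquire`, and `release` are hypothetical, and the caller is assumed to measure `elapsed_ms` for each request.

```python
from typing import Dict, List


class LeastResponseTimeBalancer:
    """Picks the server with the lowest estimated wait (hypothetical helper, not thread-safe)."""

    def __init__(self, servers: List[str], alpha: float = 0.3, initial_ms: float = 100.0) -> None:
        if not servers:
            raise ValueError("at least one server is required")
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self._alpha = alpha
        self._active: Dict[str, int] = {s: 0 for s in servers}
        # Every server starts with the same assumed latency until real samples arrive.
        self._avg_ms: Dict[str, float] = {s: initial_ms for s in servers}

    def _score(self, server: str) -> float:
        # Assumed rule: average latency scaled by the queue this request would join.
        return self._avg_ms[server] * (self._active[server] + 1)

    def acquire(self) -> str:
        # Lowest score wins; ties go to the earliest-listed server.
        server = min(self._active, key=self._score)
        self._active[server] += 1
        return server

    def release(self, server: str, elapsed_ms: float) -> None:
        # Record a finished request and fold its latency into the moving average.
        if self._active[server] == 0:
            raise ValueError(f"{server} has no active connections")
        self._active[server] -= 1
        self._avg_ms[server] = self._alpha * elapsed_ms + (1 - self._alpha) * self._avg_ms[server]
```

A larger `alpha` makes the balancer react faster to recent latency, at the cost of more jitter. The two dictionaries, updated on every request, are the monitoring overhead described above.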

6. Random Algorithm

As the name suggests, the Random algorithm assigns each request to a randomly chosen server. It is a simple option when no other factors need to be taken into account.

When to Use:

Homogeneous environments where server performance is identical and there’s no need for sophisticated load-balancing logic.

Scenarios where simplicity is more important than optimization.

Advantages:

Simple and fast: No need to maintain any state about the servers or connections.

Low overhead: Since it's random, there's no performance hit from tracking connections or server status.

Disadvantages:

Uneven in the short term: Over small numbers of requests, chance alone can send noticeably more traffic to some servers than to others. The distribution only evens out over large volumes.

No adaptability: Doesn’t account for current load, leading to possible overloading of servers.

Code Implementation
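
Below is a minimal Python sketch using the standard library's `random.Random`. The class name `RandomBalancer` and the optional `seed` parameter are hypothetical. The seed exists only to make tests reproducible, and production use would normally leave it unset.

```python
import random
from typing import List, Optional


class RandomBalancer:
    """Picks a server uniformly at random for each request (hypothetical helper)."""

    def __init__(self, servers: List[str], seed: Optional[int] = None) -> None:
        if not servers:
            raise ValueError("at least one server is required")
        self._servers = list(servers)
        # A private generator, so seeding it does not affect other code using `random`.
        self._rng = random.Random(seed)

    def next_server(self) -> str:
        # No per-server state is consulted: each pick is independent of previous ones.
        return self._rng.choice(self._servers)
```

Over thousands of requests each server receives close to an equal share. Over a handful of requests, the counts can differ noticeably, which is the short-term unevenness listed above.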

Conclusion

The choice of algorithm should be based on the nature of your application, the homogeneity of your servers, and the traffic patterns. For high-traffic applications where performance and user experience matter, sophisticated algorithms like Least Connections or Least Response Time can make a significant difference. However, in simpler scenarios with equal server capacities, Round Robin or Random can serve well.

By understanding these algorithms and their trade-offs, you can optimize your load-balancing strategy to match your system's needs. Whether your system deals with millions of requests or a smaller, predictable workload, a load balancer ensures smooth operation by distributing traffic efficiently.